In our previous article, we discussed one barrier to adopting modeling and simulation (M&S) – the true cost of setting up in-house capabilities.

But a fundamental challenge needs to be recognized when it comes to applying Artificial Intelligence (AI), Machine Learning (ML), and data-driven models. And it’s a non-starter.

It’s not algorithms, compute power, or even talent – it’s data readiness: availability, accessibility, and quality. In the pharmaceutical industry, M&S efforts repeatedly stall because the foundational data required for purely data-driven approaches is either unavailable, untrustworthy, prohibitively expensive to generate, or fundamentally unsuitable for the decisions we need to make.

Over the past decade, AI and ML have demonstrated remarkable success in digital-native industries, where data is abundant, cheap, and continuously generated. It is tempting to assume that the same playbook applies to all industries, including pharmaceutical process development and manufacturing, where the operating environment is far different.

Data Availability

Pharmaceutical manufacturing processes are complex, highly regulated, and often developed under intense time pressure. Historical datasets are typically sparse and fragmented, collected for quality assurance or regulatory compliance and not with predictive modeling in mind. Data are distributed across laboratories, pilot plants, manufacturing sites, and contractors, typically stored in incompatible systems with limited traceability. Even when digitized data does exist, it usually reflects a relatively narrow operating window, i.e. the “safe space” during development, rather than data from the broader design space that can better train robust AI or ML models.

Data Trustworthiness

But availability alone is not enough. Trust in the data is an equally critical challenge. Pharmaceutical data are affected by numerous sources of variability: manual sampling, batch-to-batch inconsistency, equipment differences, operator effects, sensor drift, and undocumented process changes. Contextual information (why a run was performed, what constraints were present, which deviations occurred) is frequently missing or poorly recorded. Data-driven models are exceptionally good at fitting patterns, even when those patterns are accidental or misleading. Without a strong foundation of trusted, contextualized data, these models are at risk of becoming high-confidence engines with low-quality insight.

The Cost of Data Generation

The challenge becomes even more pronounced when considering the cost of data generation. Unlike digital industries where data generation is nearly free, pharmaceutical data is expensive by design. Each experiment may require weeks or months of wet and dry lab development work, costly raw materials, specialized equipment, GMP grade facilities, and highly trained personnel. Designing experiments specifically to “feed the data monster” of AI is often economically unrealistic, particularly during early development when budgets are constrained and timelines are unforgiving. The result is a fundamental mismatch between the data hunger of modern ML models and the economic realities of pharmaceutical development.

This imbalance becomes critical during scale-up and technology transfer. Generating data at manufacturing scale is not only expensive, but also often practically impossible. Much of the available data is generated at lab or pilot scale, yet the phenomena governing large-scale behavior (mixing, heat transfer, mass transfer, residence time distribution) are inherently scale-dependent. Data-driven models trained on small-scale datasets frequently fail when extrapolated to commercial manufacturing, not because the algorithms are weak, but because the underlying physics have changed. In these scenarios, collecting more of the same data does not solve the problem; it simply reinforces the wrong assumptions and gives a false sense of confidence.

All of this ultimately feeds into cost - not just the cost of experimentation, but the downstream cost of poor decision-making. A model that performs well in cross-validation yet fails during scale-up, tech transfer, or routine manufacturing, can lead to delays, rework, regulatory risk, and lost revenue. In a highly regulated industry, technical failures impact the whole business.

Improving Data Readiness

This is why mechanistic and hybrid modeling approaches are essential for the pharmaceutical industry. Mechanistic models encode first principles, mass balances, energy balances, reaction kinetics, thermodynamics, and transport phenomena, providing inherent extrapolation capability and physical interpretability. They are grounded in reality rather than correlation. Critically, they do not require massive datasets to be useful and can remain valid even when data is sparse, noisy, or imperfect.

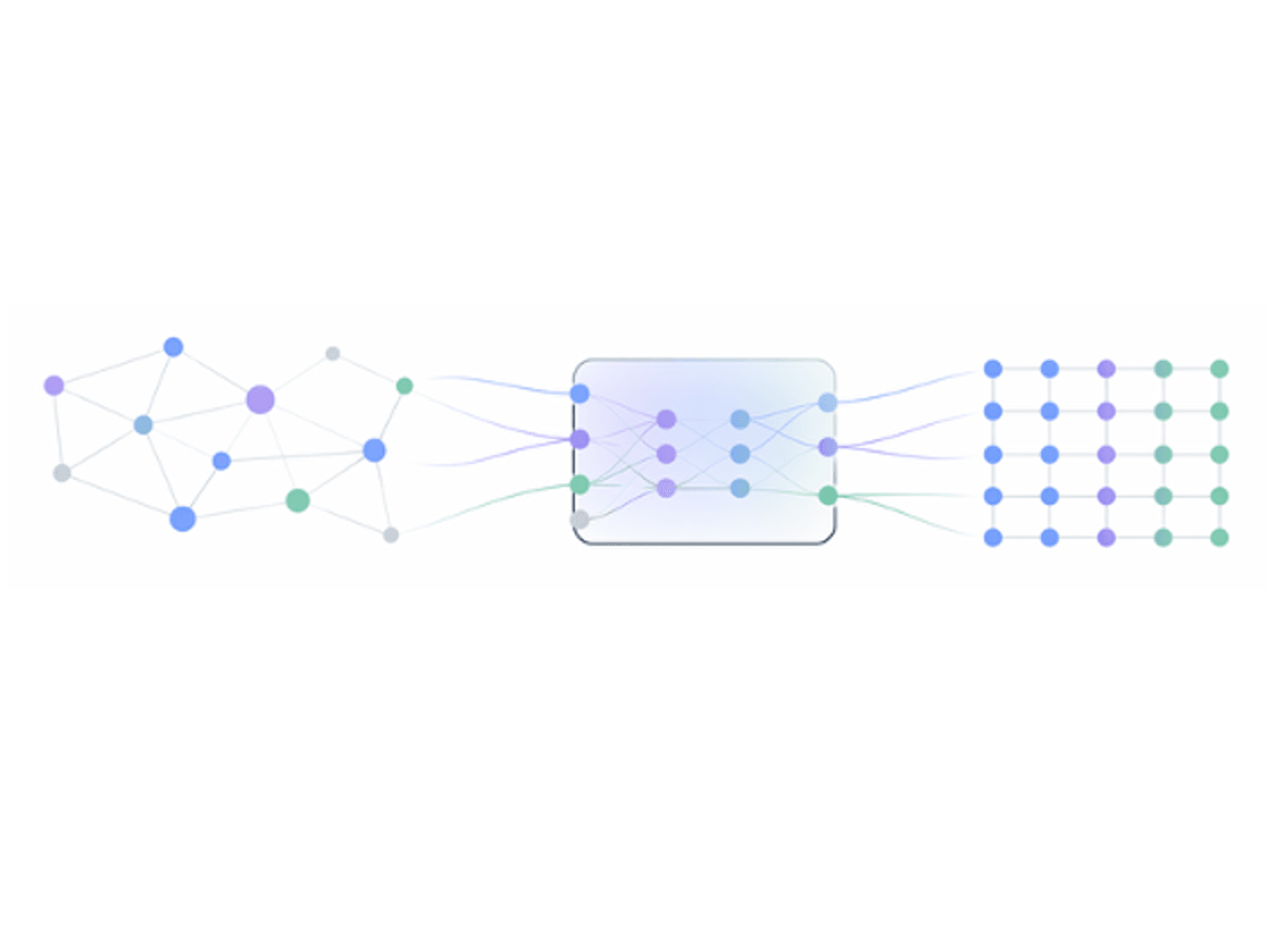

Hybrid models, digital twins, and detailed mechanistic frameworks - services offered from Procegence - combine the strengths of physics-based modeling with data-driven techniques. In these approaches, physics constrain the solution space, ensuring consistency with known laws and scale-dependent behavior, while data is used where it adds the most value: parameter estimation, model calibration, bias correction, and capturing unknown or poorly understood effects. Instead of asking data to explain everything, hybrid models ask data to complement the understanding.

The result is not just a predictive model, but a decision-ready model - one that is transparent, interpretable, scalable, and aligned with process understanding. These models support development decisions, enable more reliable scale-up, reduce experimental burden, and build confidence across technical, operational, and regulatory stakeholders.

In an industry where data is expensive, scale matters, and decisions carry true risk, the future of AI is not purely data-driven. It is physics-informed, hybrid, and purposefully designed to impress with not just accuracy metrics alone, but to deliver trustworthy insight where it matters most.

Contact the Procegence team to see how you can get started.

And in case you missed it, we invite you to check out our first blog in this series, Why Modeling and Simulation Adoption Still Stalls - Despite its Proven Value.

Next Up:

Why Modeling and Simulation Adoption Still Stalls: Cultural Resistance